Issue #6581

closed

Pulpcore delete_orphans took too long

Description

Recently I ran delete_orphans on pulpcore-3.0.1-2 and it took 15 hours, which is quite a bit longer than it takes with Pulp 2. Pulp 2 took just under 3 minutes.

My Katello setup is as follows:

Repos:

- 72 BusyBox (Docker)
- 51 Alpine (Docker)
- 51 Bash (Docker)
- 72 Large File (70,000 synced units)
- 1 Very Large File (150,000 synced units)
- 5 File with 10 uploaded files each

Content views:

- 1 Large View with 100 versions:
  - 90 versions with all Docker repos
  - 5 versions with all Docker repos plus Large File
  - 5 versions with only Large File
- Another view with 10 versions:
  - All Busybox only
- 100 Little Views with 1 version each:
  - All Docker repos


Updated by fao89 over 4 years ago


- Triaged changed from No to Yes

- Sprint set to Sprint 71

Updated by iballou over 4 years ago


When I hit this issue, I had just migrated from Pulp 2. The Pulp 2 to 3 migration is still something of a tech preview feature, so I think we can safely ignore the migration when reproducing this. Including it might cause unneeded headaches, considering that content syncing takes a while too.

Instructions for creating a reproducer environment:

- Set up Forklift: https://github.com/theforeman/forklift along with the DNS steps (if you want).

- In your Forklift 99-local.yaml, add the following:

      centos7-katello-3.15:
        box: centos7-katello-3.15
        cpus: 8
        memory: 32768

- Run `vagrant up centos7-katello-3.15` and then `vagrant ssh centos7-katello-3.15`.

- Follow these steps to improve the Katello server's tuning: https://projects.theforeman.org/issues/29370#note-5

- Navigate to https://<your VM's hostname> and make sure the Katello server is up and running.

- Clone my scripts repo: https://github.com/ianballou/katello-performance-scripts

- Run `migration_performance_test --setup`. It will likely take a number of hours due to the amount of content. Note that I had trouble syncing many repositories at the same time with Pulp 2, so it's mostly serial syncs. You might want to run this overnight.

- Run `migration_performance_test_tier_2 --setup`. This script won't take nearly as long as the first one.

- Check the Foreman tasks monitor to ensure all the tasks passed successfully. Double check that all the content is properly synced and that all of the content views exist.

- To try orphan cleanup, run `foreman-rake katello:delete_orphaned_content` or just do it through the Pulp 3 API.
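For the Pulp 3 API route mentioned in the last step, here is a minimal sketch of what the request might look like. It assumes that pulpcore 3.x of this era exposes orphan cleanup as `DELETE /pulp/api/v3/orphans/` and accepts HTTP basic auth. The host name, user name, and password are placeholders, and a Katello install may instead require client certificates, so verify against your own deployment before relying on it.

```python
import base64
import urllib.request


def build_orphan_cleanup_request(base_url, username, password):
    """Build (but do not send) a DELETE request for orphan cleanup.

    ASSUMPTION: the endpoint path /pulp/api/v3/orphans/ and basic auth
    match your pulpcore version; check your API docs.
    """
    url = base_url.rstrip("/") + "/pulp/api/v3/orphans/"
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    request = urllib.request.Request(url, method="DELETE")
    request.add_header("Authorization", f"Basic {token}")
    return request


if __name__ == "__main__":
    # Placeholder host and credentials (hypothetical); replace with your own.
    req = build_orphan_cleanup_request(
        "https://katello.example.com", "admin", "password"
    )
    print(req.get_method(), req.full_url)
    # To actually trigger the cleanup, uncomment the lines below.
    # with urllib.request.urlopen(req) as resp:
    #     print(resp.status, resp.read().decode())
```

The request is only built here, not sent, so the sketch can be inspected safely before pointing it at a real server.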

Updated by dalley over 4 years ago


That is excellent information Ian, it should be extremely helpful in reproducing this.

I had a couple of different theories about why this might be slow, and possibly they're all true at once:

- The orphan detection queries could be slow by virtue of checking the PKs against a list of >100,000 PKs: https://github.com/pulp/pulpcore/blob/master/pulpcore/app/tasks/orphan.py#L17-L19

- We have no index on the "pulp_type" field, and we're performing filters on it: https://github.com/pulp/pulpcore/blob/master/pulpcore/app/tasks/orphan.py#L20

- We're using synchronous I/O to delete files one by one: https://github.com/pulp/pulpcore/blob/master/pulpcore/app/tasks/orphan.py#L47-L48
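To make the first two theories concrete, here is a small self-contained sketch using SQLite. The schema (`content`, `repository_content`), the index names, and the sample data are all hypothetical and are not pulpcore's actual models or queries. The first function mimics the "compare against a big PK list" pattern by materializing the referenced PKs on the client side, while the second leaves the work to the database as an anti-join and relies on an index on `pulp_type`.

```python
import sqlite3

# Hypothetical schema, loosely inspired by the discussion above.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE content (pk INTEGER PRIMARY KEY, pulp_type TEXT NOT NULL);
    CREATE TABLE repository_content (
        id INTEGER PRIMARY KEY,
        content_id INTEGER NOT NULL REFERENCES content(pk)
    );
    -- Indexes of the kind the second theory says are missing.
    CREATE INDEX content_pulp_type_idx ON content (pulp_type);
    CREATE INDEX repository_content_content_idx
        ON repository_content (content_id);
""")

# 1000 content units; odd PKs are "file.file", even PKs are "docker.blob".
conn.executemany(
    "INSERT INTO content (pk, pulp_type) VALUES (?, ?)",
    [(pk, "file.file" if pk % 2 else "docker.blob") for pk in range(1, 1001)],
)
# Only PKs 1..900 are still referenced by a repository; 901..1000 are orphans.
conn.executemany(
    "INSERT INTO repository_content (content_id) VALUES (?)",
    [(pk,) for pk in range(1, 901)],
)


def orphans_via_pk_list(conn, pulp_type):
    """Pull every referenced PK to the client, then filter against that set."""
    referenced = {
        row[0]
        for row in conn.execute(
            "SELECT DISTINCT content_id FROM repository_content"
        )
    }
    rows = conn.execute(
        "SELECT pk FROM content WHERE pulp_type = ? ORDER BY pk", (pulp_type,)
    )
    return [pk for (pk,) in rows if pk not in referenced]


def orphans_via_anti_join(conn, pulp_type):
    """Let the database find unreferenced rows with NOT EXISTS."""
    rows = conn.execute(
        """
        SELECT c.pk FROM content AS c
        WHERE c.pulp_type = ?
          AND NOT EXISTS (
              SELECT 1 FROM repository_content AS rc
              WHERE rc.content_id = c.pk
          )
        ORDER BY c.pk
        """,
        (pulp_type,),
    )
    return [pk for (pk,) in rows]


file_orphans = orphans_via_anti_join(conn, "file.file")
```

Both functions return the same set of orphans. Which one is faster on a real PostgreSQL database with more than 100,000 rows would have to be measured, for example with `EXPLAIN ANALYZE`.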

Updated by ttereshc over 4 years ago


Just to confirm that even on a small setup it takes longer than expected. I had 2 RPM repositories, centos8 baseos kickstart and fedora32. That's it, no other repositories, no other content. Both repos were synced with the on_demand policy, so only metadata files are on the fs. I removed both repos and ran orphan clean-up, which took 3.5 minutes (removed 57230 content units and 13 artifacts).

Updated by dalley over 4 years ago


- Status changed from ASSIGNED to POST

Updated by dalley over 4 years ago


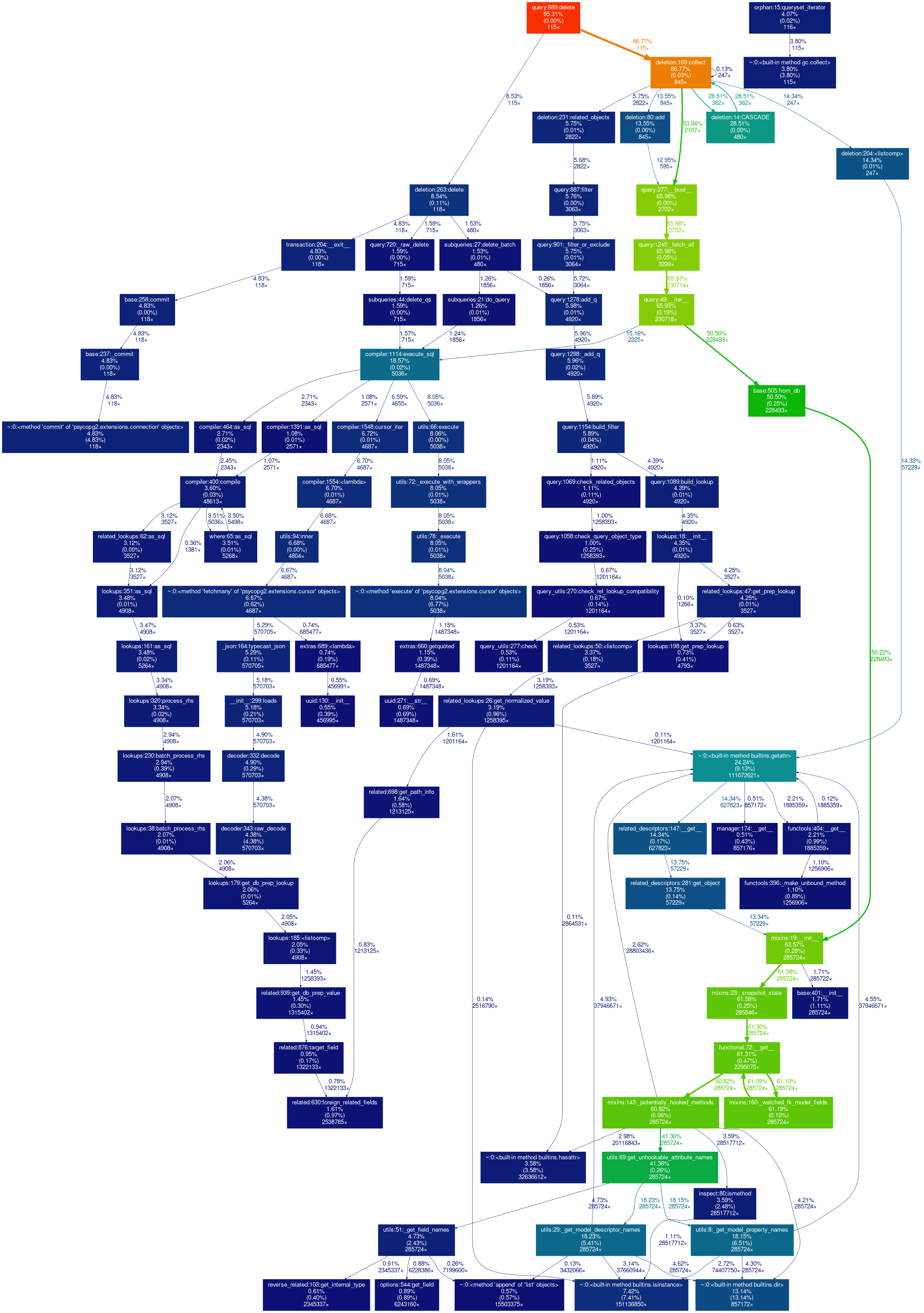

- File orphan_profile2.png added

The linked PR improves performance by roughly 40% and also reduces memory consumption. I don't really think that is enough of an improvement to close this issue, but I also don't see an easy way to improve it further. It looks like django-lifecycle, used by RBAC, is incurring some overhead here (65%?), but I'm not sure that can be easily rectified (Brian agreed).

Brian has some ideas about preventing it from blocking other tasks, which should help mitigate the practical issues of it taking a long time, but that's technically a separate issue. I'm going to send this one back to NEW and remove it from the sprint since it was mitigated without a full fix (and without one forthcoming).

Updated by dalley over 4 years ago


- Status changed from POST to NEW

- Assignee deleted (dalley)

- Sprint deleted (Sprint 81)

Added by dalley over 4 years ago


Updated by dalley over 4 years ago


- Status changed from NEW to MODIFIED

- Assignee set to dalley

Updated by pulpbot over 4 years ago


- Status changed from MODIFIED to CLOSED - CURRENTRELEASE

Improve performance of orphan cleanup slightly

Also memory consumption.

re: #6581 https://pulp.plan.io/issues/6581